From Source Code to Public Availability

As a user of n98-magerun, you are probably familiar with the easy self-upgrade command that is built into the tool. What is less well known is the process behind every release of a new version. Since the tool is used in critical production environments, we have added more and more steps to increase stability and quality. This blog post describes how we release a new version.

Quality Assurance (QA)

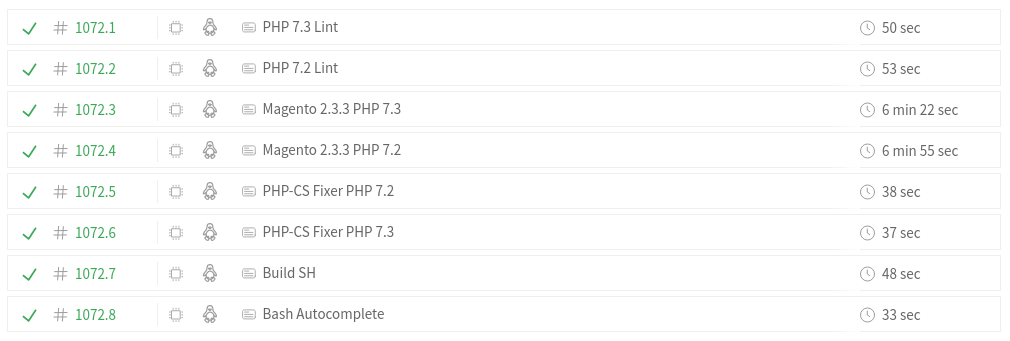

When a new pull request arrives on GitHub or a new commit is pushed to the develop or master branch, we start a Travis build job.

Travis is a publicly available cloud CI server which is free for Open Source projects like n98-magerun. Travis itself is also an Open Source tool. Travis offers a paid plan for private projects. The company is based in Germany, so data protection according to GDPR is covered if you are from the European Union. We have been using this service for free for years, so it’s only fair to talk about Travis and the services they offer.

https://travis-ci.org/netz98/n98-magerun2

If you are interested in how the QA is configured, have a look at the source code in our GitHub repo. The main entry point is the .travis.yml file.

The main purpose of the Travis jobs is to run automated tests against different major Magento versions to see if something breaks or is no longer compatible.

Continuous Delivery (CI-CD)

I am a big fan of automation. Automation increases the quality as well. Any boring job which has to be done all the time should be automated. If something goes wrong, take it positively: it gives you the chance to optimize the automation process.

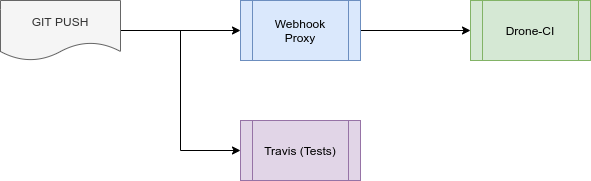

All automation is triggered by GitHub webhooks.

We use webhooks to start:

- Packagist updates

- Travis-CI pipeline

- Drone-CI pipeline

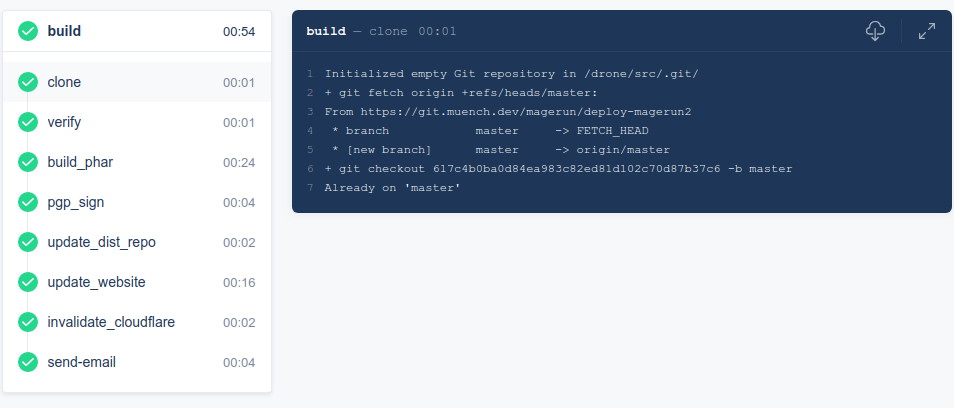

As written before, we use Travis to run the tests. For new releases, we start a Drone-CI pipeline which creates a new phar file. The phar file is signed via PGP. Then we upload the new phar file to the download portal and publish a new phar distribution as a Composer package. Finally, we update the website with the new version information, create a phive version, invalidate the CDN and send a success or failure email to notify me.
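To make the order of the release steps easier to follow, here is a minimal Go sketch of such a sequence. It is only a model, not our real Drone-CI configuration: the `step` type, `runPipeline`, `errSimulated` and the no-op step functions are hypothetical names invented for this illustration. The sketch runs the steps in order, stops at the first failure and always sends exactly one notification.

```go
package main

import (
	"errors"
	"fmt"
)

// step is a hypothetical model of one pipeline stage.
type step struct {
	name string
	run  func() error
}

// errSimulated is used in main to demonstrate the failure path.
var errSimulated = errors.New("simulated failure")

// runPipeline executes the steps in order. It stops at the first
// failing step and calls notify exactly once, with the outcome.
func runPipeline(steps []step, notify func(success bool, detail string)) error {
	for _, s := range steps {
		if err := s.run(); err != nil {
			err = fmt.Errorf("step %q failed: %w", s.name, err)
			notify(false, err.Error())
			return err
		}
	}
	notify(true, "all steps finished")
	return nil
}

func main() {
	// noop stands in for the real work of a step (assumption).
	noop := func() error { return nil }

	steps := []step{
		{"create phar file", noop},
		{"sign phar file via PGP", noop},
		{"upload to download portal", noop},
		{"publish Composer package", noop},
		{"update website", noop},
		{"create phive version", noop},
		{"invalidate CDN", func() error { return errSimulated }},
	}

	// In the real pipeline the notification is an email.
	_ = runPipeline(steps, func(success bool, detail string) {
		fmt.Println("notification:", success, detail)
	})
}
```

Sending the notification from one place keeps the success and the failure mail symmetric, no matter which step breaks.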

Infrastructure

To receive the data of a GitHub webhook, I wrote a small tool in Go. It runs in a small Docker container and uses only ~13 MB of memory. The purpose of the webhook proxy is to trigger a Drone-CI build if necessary. There is a small piece of business logic which decides if a build should be triggered: we trigger a build if the develop branch or a tag is pushed. If the develop branch is pushed, we create an “unstable” development version. If a tag is pushed, we create a new stable release.

I really like Drone-CI. For me, the concept of Drone-CI is very lightweight, and its deep Docker integration makes it easy to extend the pipeline with plugins.

A few months ago we were still using GitLab as CI. With Drone-CI it was possible to speed up the process a lot. The complete build now runs in less than one minute. We are so fast because Drone-CI shares the “artifact folder” as a Docker volume mount between all jobs in a pipeline.
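The decision logic can be sketched in a few lines of Go. This is not the source code of the real proxy, only a minimal illustration under some assumptions: it reads the `ref` field of a GitHub push payload, and the names `BuildKind`, `decideBuild` and `decideFromPayload` as well as the example tag are made up for this post.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// BuildKind describes which build, if any, a push should trigger.
type BuildKind int

const (
	NoBuild       BuildKind = iota // push is ignored
	UnstableBuild                  // develop branch was pushed
	StableBuild                    // a tag was pushed
)

// pushEvent holds the only field of the push payload this sketch needs.
type pushEvent struct {
	Ref string `json:"ref"`
}

// decideBuild maps a Git ref to the kind of build to trigger.
func decideBuild(ref string) BuildKind {
	const tagPrefix = "refs/tags/"
	switch {
	case ref == "refs/heads/develop":
		return UnstableBuild
	case strings.HasPrefix(ref, tagPrefix) && len(ref) > len(tagPrefix):
		return StableBuild
	default:
		return NoBuild
	}
}

// decideFromPayload parses a push payload and applies decideBuild.
func decideFromPayload(payload []byte) (BuildKind, error) {
	var ev pushEvent
	if err := json.Unmarshal(payload, &ev); err != nil {
		return NoBuild, err
	}
	return decideBuild(ev.Ref), nil
}

func main() {
	// The tag name is a hypothetical example.
	refs := []string{"refs/heads/develop", "refs/tags/4.0.0", "refs/heads/master"}
	for _, ref := range refs {
		fmt.Printf("%s -> %d\n", ref, decideBuild(ref))
	}
}
```

In this sketch a push to any other branch, including master, results in `NoBuild`, which matches the rule that only develop and tags start a Drone-CI build.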

Download Portal

The download portal is very important for you. This website is the place where we host all the phar files. Every download or update gets its data from this website.

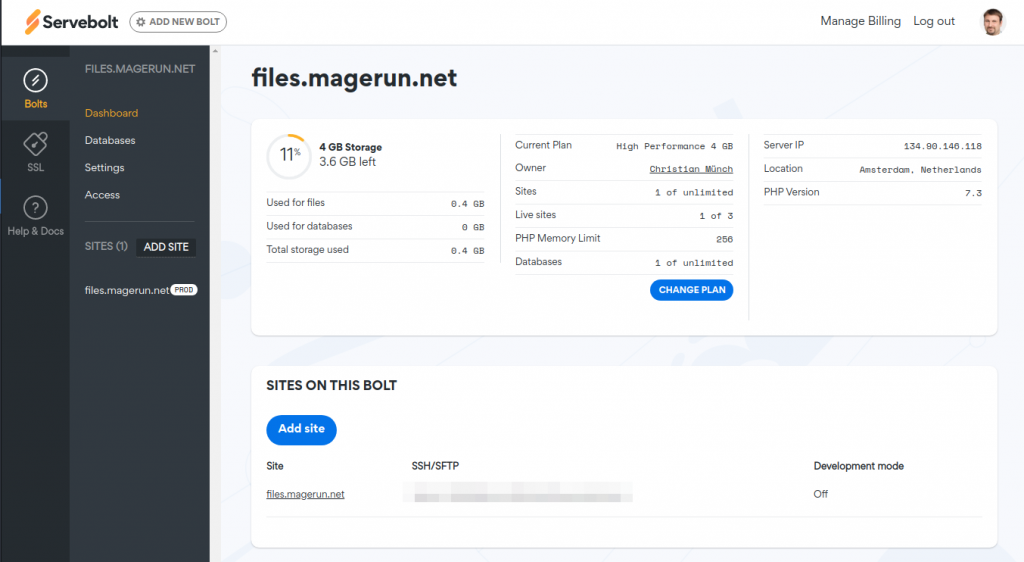

Last week, we received a request from Servebolt. They offered us a sponsorship for this critical infrastructure. That’s really great news for all of us.

Within a few hours we transferred everything into a cloud-hosted, fully managed environment. The complete infrastructure is now managed via the Servebolt back office. We have a simple PHP project, but the company also offers special Magento hosting templates.

The complete setup and transfer of the website took only a few hours. I used Servebolt’s interactive customer chat for communication. The response times were really good.

Cloudflare CDN

The Servebolt sponsorship comes with a Cloudflare CDN integration. Servebolt is an official Cloudflare partner, so we use this service to cache all the phar files. The main advantage for you is that the phar files are downloaded from the nearest server location.

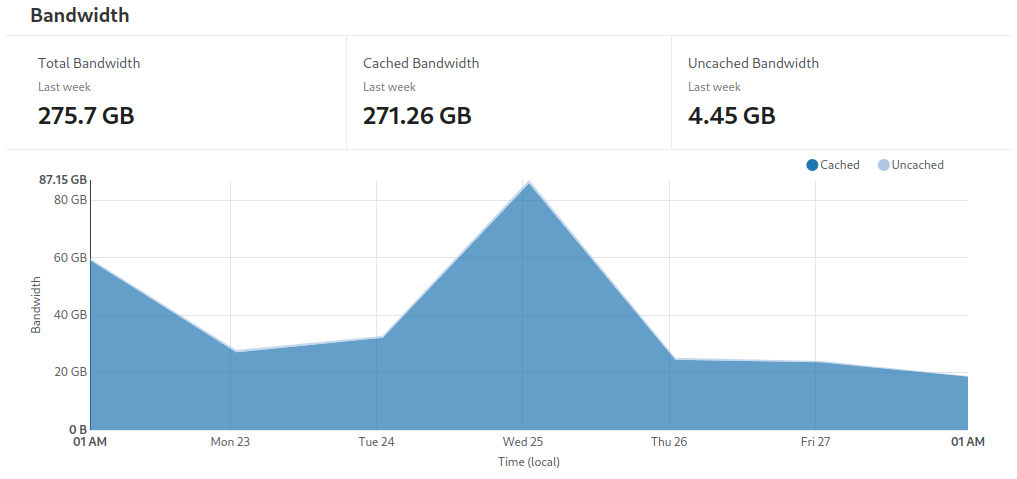

The picture below shows how much traffic the downloads generate. Most of the 271 GB per week are phar files.

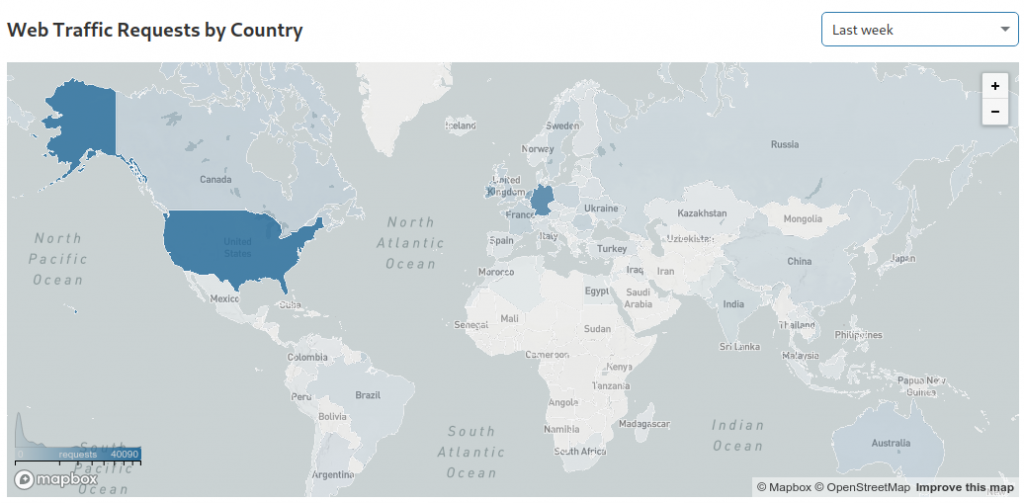

It’s also interesting to see where most of the downloads come from.

Thanks

At this point we want to thank all the sponsors behind the tool.
